I’ve been dabbling in modular for quite a while, but up until recently I mostly treated my system as a collection of independent monosynth voices. Lately I’ve started taking modular much more seriously, and my goal has shifted toward building a performance-oriented system capable of generating full, semi-generative tracks — IDM, acid, techno, etc.

What I’m aiming for is less of a traditional “play notes on a synth” workflow and more of a living system where sequencing, modulation, probability, and interaction drive the music forward. I want the patch to behave like an ecosystem — patterns evolving, rhythms mutating, voices influencing each other — rather than a set of isolated voices running static loops.

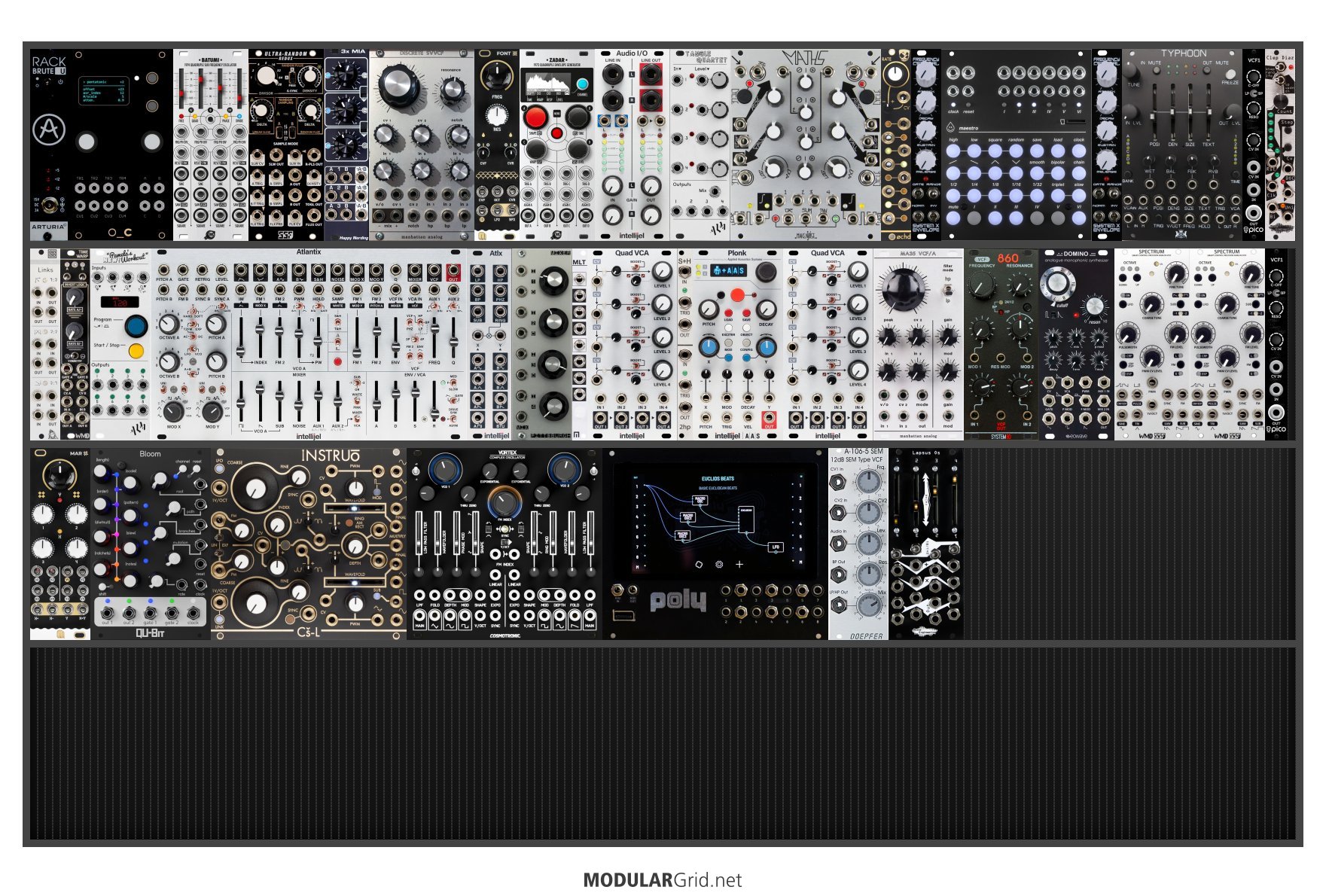

As you can see, I already have a wide range of sound sources, but I suspect what I really need now is more plumbing — routing, modulation infrastructure, logic, interaction, and system-level control. The RackBrute sits horizontally at the base of the Mega Rack and functions as a kind of control surface. That’s where I keep most of my logic and trigger processing. I’m still very much learning, but I’m trying to move toward a setup where everything interacts dynamically instead of operating independently.

I’d love to hear ideas from people who think in terms of systems rather than individual modules. What would help transform this into a more cohesive, interacting ecosystem? Where are the likely weak points? What kinds of “plumbing” tend to unlock the most potential in large, performance-focused modular systems?

For recording, I plan to capture evolving takes as stems into a Bluebox and then develop them further outside the rack.

I’m approaching this with a beginner’s mindset and fully aware there’s a lot I don’t yet understand, so I’d really appreciate any guidance, architectural thoughts, or suggestions on how to make the system more cohesive, playable, and alive.

Here is the RackBrute that sits at the base of the Mega Rack:

For some reason, the Mega Rack thumbnail isn't loading correctly, so you'll need to click it to see the layout accurately.

Any help or advice would be greatly appreciated.